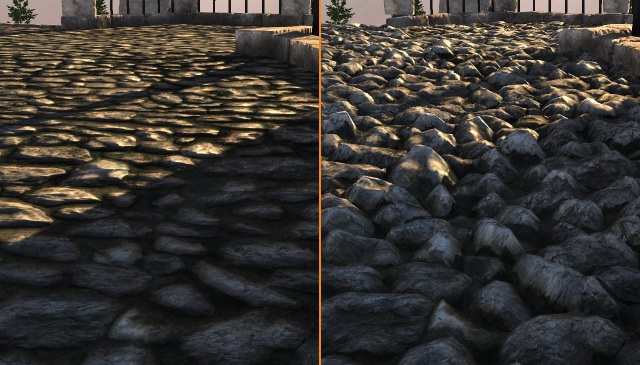

Note: Every chapter of this book is extended compared with the original video. It's also regularly updated, while the videos stay unchanged after their upload.

Are frames per second the ultimate metric?

In hardware reviews, competing products are compared by running benchmarks based on the same games. The results are expressed in frames per second. Graphics card A is supposed to outperform B, because it produces twice as many frames in the same period of time. Game C is more demanding than D, because the same hardware is able to calculate only 40 frames each second compared to 60 in the other title.

Frames per second seem to be the most popular metric for performance. So why do game developers measure in milliseconds instead? Here's a quote from an article about NVidia HairWorks [1]:

> As you might expect from a technology that is said to render tens of thousands of tessellated strands of hair, the performance hit to the game is substantial - whether you are running an Nvidia or AMD graphics card. In our test case, the GTX 970 lost 24 per cent of its performance when HairWorks was enabled, dropping from an average of 51.9fps to 39.4fps. However, AMD suffers an even larger hit, losing around 47 per cent of its average frame-rate - its 49.6fps metric slashed to just 26.3fps.

The conclusion we can draw from these numbers is that enabling HairWorks-simulated hair in Witcher 3 can make the game lose up to 23 frames per second, depending on the card. But let's assume we have another feature with an identical computational cost, for example Depth of Field. We enable both hair and DoF at the same time. Can we calculate the final fps from this information, without using milliseconds? Let's try subtracting these costs from the original frame rate.

49.6 fps - 23 fps (HW) - 23 fps (DoF) = 3.6 fps?

Now what if there was a third feature with equally high requirements, let's say HBAO? What we should end up with is:

49.6 fps - 23 fps (HW) - 23 fps (DoF) - 23 fps (HBAO) = -19.4 fps?

This apparent paradox of a negative frame rate arises from the fact that we didn't actually compare costs here, even if this was our intention. The actual cost of using these features was a period of time, not a number of frames.

Calculating feature costs with milliseconds

Running the game without additional features allowed it to complete about 50 frames per second on average. How do we get the cost of each frame in milliseconds?

Time in milliseconds = 1000 ms / frames per second

For 30, 60 and 90 fps this gives about 33.33 ms, 16.67 ms and 11.11 ms per frame. These represent common expected refresh rates for various game categories: a "cinematic" adventure (30 fps), a fast-paced action game (60 fps) and a VR product (90 fps or more, to reduce motion sickness). Each frame rate translates to a maximum time it should take to render a frame. If the software exceeds the time limit, it falls below the desired refresh rate (or even risks losing a V-Sync window).

The values above show us that every game has a certain limit of time per frame. Aiming for 30 fps leaves the engine with over 33 ms to complete all gameplay code, audio code and graphics rendering. Otherwise the player will experience fps drops.

The graphics work is split between the CPU and the GPU(s). The CPU has the role of a "manager", preparing data and sending commands to the GPU. The latter does the majority of the calculations. The exact pipeline is explained in detail in "GPU and rendering pipelines". They work (mostly) simultaneously, allowing the CPU to return to the gameplay code while the GPU begins processing meshes and shaders. This means that the graphics card can use most of this 33 ms time window.

The following screenshots show the output of a tool built into Unreal, the GPU Visualizer. It breaks down the work done by the GPU on a single frame into specific sections, like shadows or transparency. Several scenes were measured, each optimized for a different refresh rate: 30, 60 and 90 fps.

The 30 fps scene can allow for many costly features to be used at once. It has a lot of transparency and heavy lighting (multiple dynamic light sources with a big radius). In the right part of the colored bar, you can also see the post process using 3 ms.

Figure: GPU Visualizer showing a 33 ms frame.

Figure: GPU Visualizer showing a 16 ms frame.

Now the engine has to fit in half of the time: 16.67 ms. The post process time didn't change (because its cost is pixel-bound). So we still have a cost of 3 ms here, but now it's a significantly bigger chunk of the time we can spare. You can easily assume that by disabling the post process entirely we'll end up with 12 ms per frame. But it still won't be enough to land at the target of 11 ms, the 90 fps recommended for a VR kit like Oculus or Vive.

The harsh austerity of 90 fps

Figure: GPU Visualizer showing an 11 ms frame.

As you can see, even if the cost of post process is static, now at 11 ms it's much more significant (compared to other passes).
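The frame-time arithmetic used in this chapter can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, the DoF and HBAO costs are the hypothetical "identical to HairWorks" costs from the thought experiment, and the post process is assumed to cost a fixed 3 ms at every target frame rate.

```python
def fps_to_ms(fps: float) -> float:
    """Frame time in milliseconds for a given frame rate."""
    return 1000.0 / fps


def ms_to_fps(ms: float) -> float:
    """Frame rate for a given frame time in milliseconds."""
    return 1000.0 / ms


def feature_cost_ms(fps_without: float, fps_with: float) -> float:
    """Cost of a feature as added frame time, not as 'lost frames'."""
    return fps_to_ms(fps_with) - fps_to_ms(fps_without)


def fps_with_features(base_fps: float, costs_ms: list[float]) -> float:
    """Add per-feature costs to the base frame time, then convert back to fps."""
    return ms_to_fps(fps_to_ms(base_fps) + sum(costs_ms))


def budget_share(cost_ms: float, target_fps: float) -> float:
    """Fraction of the per-frame time budget consumed by a fixed cost."""
    return cost_ms / fps_to_ms(target_fps)


# AMD figures from the quoted article: 49.6 fps without HairWorks, 26.3 fps with it.
hair_cost = feature_cost_ms(49.6, 26.3)
print(f"HairWorks cost: {hair_cost:.2f} ms")  # about 17.86 ms

# Hypothetical DoF and HBAO with the same cost as HairWorks (an assumption).
print(f"hair + DoF: {fps_with_features(49.6, [hair_cost] * 2):.1f} fps")         # about 17.9 fps
print(f"hair + DoF + HBAO: {fps_with_features(49.6, [hair_cost] * 3):.1f} fps")  # about 13.6 fps

# A fixed 3 ms post process as a share of each frame budget.
for target in (30, 60, 90):
    print(f"{target} fps: budget {fps_to_ms(target):.2f} ms, "
          f"post process share {budget_share(3.0, target):.0%}")
```

Working in milliseconds means costs simply add up, so the result stays positive no matter how many features are stacked. The same 3 ms post process grows from about 9% of the budget at 30 fps to about 27% at 90 fps.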
